How LLMs Are Changing Enterprise SEO Keyword Strategy

Discover how LLMs are reshaping enterprise SEO strategies—shifting focus from traditional keywords to intent-driven content and smarter search.

Large language models (LLMs) are transforming how SEO professionals approach keyword strategy.

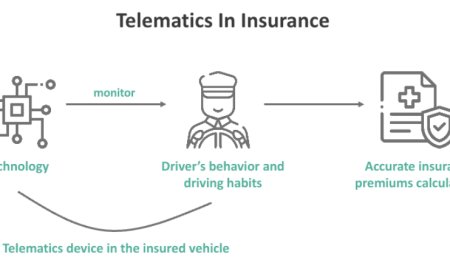

These advanced AI systems, such as GPT-4, Claude, and Gemini, leverage natural language processing (NLP) to understand user intent, process conversational queries, and deliver contextually relevant results.

This shift redefines enterprise SEO, moving it beyond traditional keyword matching to a more nuanced, intent-driven approach. Adapting to these changes is critical for SEO professionals to maintain visibility, drive organic traffic, and stay competitive in 2025.

This blog post explores how LLMs are reshaping keyword strategies, offering actionable insights and technical guidance to help enterprises thrive in this AI-driven shift.

The Shift from Keywords to Context

Traditional SEO relied heavily on keyword stuffing and exact-match phrases to rank on search engine results pages (SERPs). However, LLMs prioritize semantic understanding, focusing on the intent behind a query rather than just the words used.

According to Botify, 37% of users now find AI-enhanced search results more satisfying than traditional ones, signaling a shift toward conversational and context-driven searches.

This means that, as an SEO professional, you must move away from rigid keyword optimization and focus on creating content that aligns with user intent.

For instance, rather than matching a single keyword, LLMs analyze related terms, synonyms, and the broader context of a query such as "how to optimize enterprise websites for AI search."

This shift calls for semantic search strategies that incorporate long-tail keywords, question-based phrases, and topic clusters to cover a subject comprehensively. You can do all of this yourself or bring in an enterprise SEO expert to help. Such experts conduct semantic keyword research using tools like Surfer SEO or Ahrefs to identify related terms and the questions users ask.

They also analyze the "People Also Ask" sections on Google to uncover conversational queries like "What does an enterprise SEO expert do?" and integrate them into your content.

Optimizing for Conversational Queries

With the rise of voice search, AI assistants like Siri, and Google's AI Overviews, users are increasingly asking questions in natural, conversational language.

LLMs excel at interpreting these queries, making it essential for SEO professionals to optimize for conversational keywords.

For example, a user might ask, "What are the best strategies for enterprise SEO in 2025?" instead of typing "enterprise SEO strategies."

To optimize for conversational queries, marketers should structure content to directly answer questions. This involves using clear headings, bullet points, and Q&A formats that LLMs can easily parse.

For instance, a section titled "How to Optimize for Enterprise SEO" with concise, actionable answers is more likely to be cited by LLMs than a keyword-stuffed paragraph.

Actionable Step: Use tools like Frase or AnswerThePublic to identify common questions in your niche. Create content that addresses these in a conversational tone, ensuring it's structured with clear headings (H2, H3) and schema markup to enhance discoverability.
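
As a rough illustration of the step above, the sketch below separates question-style queries from plain keyword phrases in an exported query list. The function names and the list of question openers are assumptions made for this example, not part of any tool mentioned here.

```python
import re

# Hypothetical list of words that commonly open a question; extend for your niche.
QUESTION_OPENERS = {
    "what", "how", "why", "when", "where", "which", "who",
    "can", "does", "do", "is", "are", "should",
}

def is_conversational(query: str) -> bool:
    """Return True when a query reads like a natural-language question."""
    words = re.findall(r"[a-z']+", query.lower())
    if not words:
        return False
    return words[0] in QUESTION_OPENERS or query.strip().endswith("?")

def split_queries(queries):
    """Split queries into (question-style, keyword-style) lists."""
    questions = [q for q in queries if is_conversational(q)]
    keywords = [q for q in queries if not is_conversational(q)]
    return questions, keywords

if __name__ == "__main__":
    sample = [
        "What are the best strategies for enterprise SEO in 2025?",
        "enterprise SEO strategies",
    ]
    questions, keywords = split_queries(sample)
    print("Candidate Q&A headings:", questions)
    print("Keyword phrases:", keywords)
```

The question-style queries are natural candidates for H2 or H3 headings, with a direct answer placed immediately underneath.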

Leveraging Structured Data for LLM Visibility

Structured data, such as schema markup, is critical for helping LLMs understand and index content. Structured data acts as a roadmap for AI systems, providing explicit clues about a page's content.

Implementing schema like FAQPage, HowTo, or Article can improve the chances of content being featured in AI-generated responses.

For example, a page about professional SEO services can use schema to highlight key services, pricing, or FAQs, making it easier for LLMs to extract and present this information. This is particularly important for zero-click searches, where LLMs provide direct answers without directing users to a website.

Actionable Step: Implement schema markup using tools like Merkle's Schema Markup Generator. Focus on structured data types relevant to your content, such as Product, Service, or Organization schema, to enhance LLM comprehension and improve visibility in AI-driven searches.
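
For teams that prefer to generate markup programmatically, here is a minimal sketch of building FAQPage structured data as JSON-LD. The property names follow the schema.org FAQPage vocabulary; the helper names and the sample question and answer are placeholders invented for this example.

```python
import json

def build_faq_jsonld(faqs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }

def to_script_tag(data):
    """Serialize the object into a JSON-LD script tag for the page's HTML."""
    # Escape "</" so text inside an answer cannot close the script element early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"

if __name__ == "__main__":
    # Placeholder content; replace with the real questions and answers on the page.
    faqs = [
        (
            "What does an enterprise SEO expert do?",
            "Placeholder answer text that matches the visible page content.",
        ),
    ]
    print(to_script_tag(build_faq_jsonld(faqs)))
```

It is worth running the generated markup through a validator before publishing, and the marked-up answers should mirror what is visible on the page.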

Building Topical Authority with Content Clusters

LLMs favor content that demonstrates depth and authority on a topic. Well-organized, interlinked content is more likely to be cited by AI models.

For SEO professionals, this means creating content clusters: groups of interlinked pages that cover a topic comprehensively.

For example, a content cluster around "SEO professional" could include a pillar page on "What Is SEO?" with supporting articles on "Keyword Strategies for SEO," "Technical SEO for Large Websites," and "Case Studies of Successful SEO Campaigns."

Internal linking between these pages signals topical relevance to LLMs and search engines alike.

Actionable Step: Map out a content cluster using tools like MarketMuse or Clearscope. Create a pillar page that broadly covers your topic and link it to 5 to 10 supporting articles, each targeting specific subtopics or long-tail keywords. Ensure internal links use descriptive anchor text to reinforce context.
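
One way to sanity-check a cluster once it is mapped is to confirm that the pillar page and each supporting article link to one another. The sketch below assumes you already have a mapping from each page URL to the internal URLs it links to (for example, from a crawl export). The function name and the URLs are hypothetical.

```python
def audit_cluster(pillar, supporting, links):
    """Report missing pillar-to-article and article-to-pillar links.

    `links` maps each page URL to the set of internal URLs it links to.
    """
    issues = []
    pillar_links = links.get(pillar, set())
    for page in supporting:
        if page not in pillar_links:
            issues.append(f"{pillar} does not link to {page}")
        if pillar not in links.get(page, set()):
            issues.append(f"{page} does not link back to {pillar}")
    return issues

if __name__ == "__main__":
    # Hypothetical URLs used only to illustrate the check.
    pillar = "/what-is-seo"
    supporting = ["/keyword-strategies", "/technical-seo-large-websites"]
    links = {
        "/what-is-seo": {"/keyword-strategies"},
        "/keyword-strategies": {"/what-is-seo"},
        "/technical-seo-large-websites": set(),
    }
    for issue in audit_cluster(pillar, supporting, links):
        print(issue)
```

This only checks that the links exist. Whether the anchor text is descriptive still needs a manual or separate review.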

Enhancing E-E-A-T for LLM Trust

Google's E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) framework remains a cornerstone of SEO, and LLMs amplify its importance. LLMs prioritize content from credible sources with demonstrated expertise.

This means showcasing author credentials, citing reputable sources, and maintaining a consistent brand voice across platforms.

For example, a blog post authored by a recognized writer with a detailed bio and links to their LinkedIn profile or past work is more likely to be trusted by LLMs. Additionally, backlinks from authoritative domains in your niche enhance your content's credibility.

Actionable Step: Include author bios with credentials and link to reputable sources like industry studies or government websites. Use tools like Moz or SEMrush to analyze your backlink profile and pursue high-quality, contextually relevant backlinks.

Monitoring and Adapting to LLM Traffic

LLMs are driving a surge in referral traffic, with Search Engine Land reporting an 800% increase in LLM referral traffic between Q3 and Q4 of 2024.

Marketers must monitor this traffic to understand how LLMs are citing their content. Tools like Google Analytics 4 can track LLM referrals by setting up custom segments for AI-driven sources like ChatGPT or Perplexity.

Regularly updating content is also critical, as LLMs prioritize fresh, relevant information. Refresh content with new statistics, insights, or case studies to maintain visibility.

Actionable Step: Set up a Google Analytics 4 report to track LLM referral traffic, using regular expressions to identify AI sources. Schedule quarterly content audits to update outdated information, add new data, and optimize for emerging trends.
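
To make the regex idea concrete, the sketch below classifies referrer URLs or hostnames from an exported traffic report as LLM-driven or not. The hostname list is an assumption and will need maintaining as AI products change domains. A similar pattern could probably be adapted to a regex condition in Google Analytics 4, but check how GA4 applies full versus partial matching before relying on it.

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Assumed hostnames for AI sources; verify against your own referral data.
LLM_REFERRER_PATTERN = (
    r"(^|\.)(chatgpt\.com|chat\.openai\.com|perplexity\.ai|"
    r"gemini\.google\.com|claude\.ai|copilot\.microsoft\.com)$"
)
LLM_RE = re.compile(LLM_REFERRER_PATTERN, re.IGNORECASE)

def is_llm_referral(referrer: str) -> bool:
    """Return True when a referrer URL or bare hostname matches an assumed AI source."""
    # urlparse only extracts a hostname when a scheme is present,
    # so fall back to the raw string for bare hostnames.
    host = urlparse(referrer).hostname or referrer.strip()
    return bool(LLM_RE.search(host))

def count_llm_referrals(referrers):
    """Count sessions per AI source hostname, ignoring non-LLM referrers."""
    counts = Counter()
    for ref in referrers:
        if is_llm_referral(ref):
            counts[urlparse(ref).hostname or ref.strip()] += 1
    return counts

if __name__ == "__main__":
    sample = ["https://chatgpt.com/", "https://www.google.com/", "perplexity.ai"]
    print(count_llm_referrals(sample))
```

Anchoring the pattern to the end of the hostname, with a leading dot or start-of-string, avoids false positives from unrelated domains that merely contain one of these names.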

Conclusion

Large language models are reshaping keyword strategy for enterprise SEO by prioritizing user intent, conversational queries, and topical authority.

Enterprise SEO experts must adapt by focusing on semantic search, structured data, content clusters, and E-E-A-T principles while monitoring LLM-driven traffic.

By implementing these strategies, enterprises can enhance visibility, drive organic growth, and stay ahead in the AI-powered search landscape of 2025.

For those seeking practical support in navigating this shift, insights drawn from working with experienced SEO agencies like ResultFirst continue to inform how brands evolve with the changing SERP landscape.